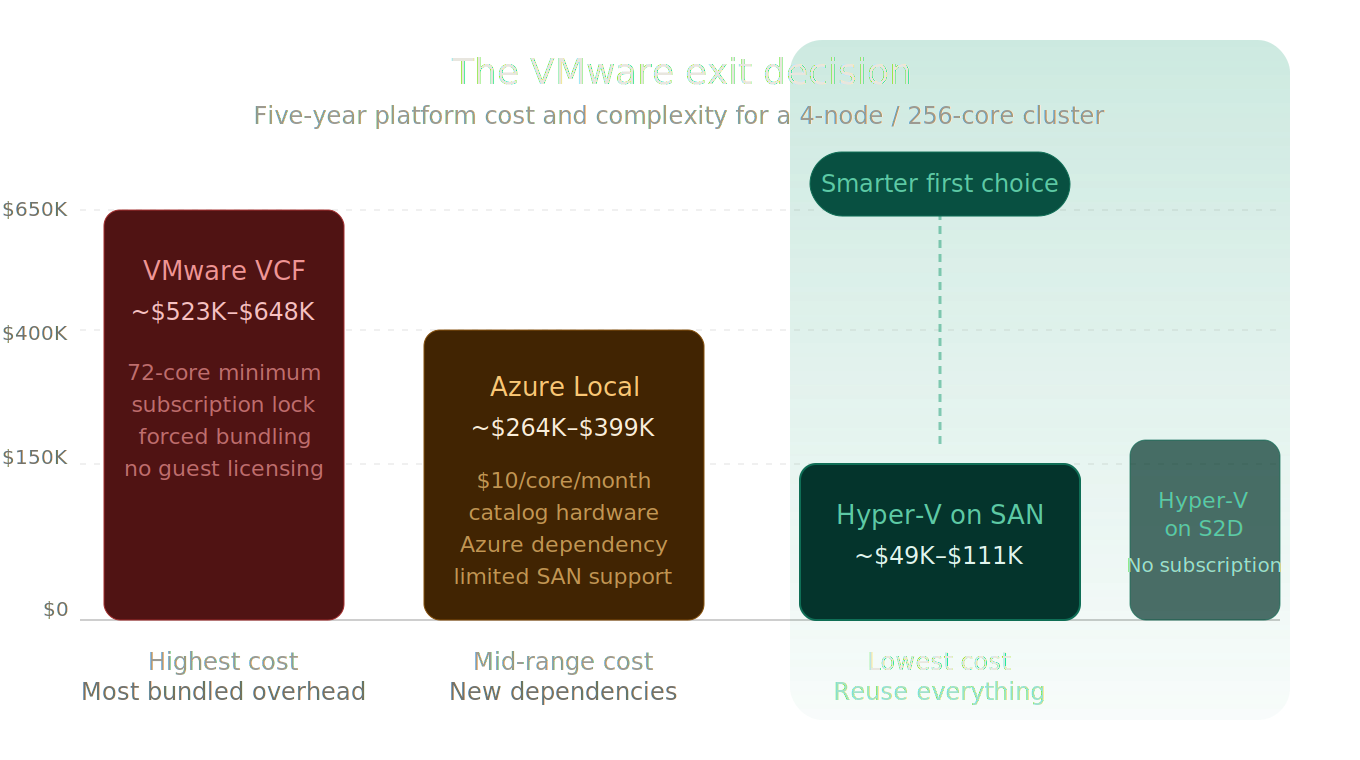

Hyper-V Is Still the Smarter First Choice

The operator's case for challenging the assumption that VCF or Azure Local should be the starting point for every VMware exit.

Azure Local is not the default VMware exit path. Neither is VMware Cloud Foundation the unquestioned benchmark it was two years ago. And yet the industry keeps framing the VMware exodus as a binary choice: stay and pay, or move to Microsoft’s preferred Azure-connected platform. Both options serve somebody’s agenda. Neither starts from the question that actually matters to infrastructure operators: what do I need, and what’s the cheapest way to get it without creating new dependencies?

For many organizations running primarily Windows workloads (the ones with 50, 100, 500 VMs on existing servers and SANs), the answer to that question is the same answer it has been for a decade. It’s Hyper-V. Not Azure Local. Not VCF. Hyper-V, running on Windows Server 2025, backed by the storage infrastructure you already own, managed by the tools you already know.

When you say you are leaving VMware, every VAR, every Microsoft partner, every migration specialist, and yes, Microsoft’s own sales and pre-sales teams point you in the same direction. Azure first. Then Azure VMware Solution. Then Azure Local. Hyper-V on Windows Server is the option that gets mentioned last, quietly, usually only after you push back on cost. Not because it cannot do the job. Because there is no recurring subscription attached to recommending it. That is true whether the conversation is with a reseller or with the Microsoft account team sitting across the table.

The irony is that Microsoft’s own documentation says something different than the sales motion. As I noted earlier in this series, Azure Local’s “primary focus is on edge and distributed environments.” Their own Compare Azure Local to Windows Server page points customers to Windows Server for SAN-backed storage, broad hardware compatibility, and traditional Hyper-V hosting. That is Microsoft’s engineers talking. This post makes the case the sales conversation skips.

Why This Post Exists

Two narratives dominate the VMware exit conversation right now, and both are designed to benefit someone other than the infrastructure team making the decision.

The VMware Narrative: Pay Up or Leave

Broadcom’s $69 billion acquisition of VMware in November 2023 (enterprise value including assumed debt) has fundamentally reshaped the virtualization market. The numbers tell the story:

- Cost increases of 150% to over 1,000% reported in extreme cases; CloudBolt’s 2026 CII study found 59% of customers experienced increases over 25%, with 14% exceeding 100% (CloudBolt CII Reality Report, January 2026)

- 72-core minimum per socket, effective April 2025, whether your CPUs have 16 cores or 72, you pay for 72

- 99% of VMware customers expressed concern about the acquisition’s impact in 2024; 85% remain concerned about future price increases in 2026 (CloudBolt CII Reality Report, January 2026, 302 IT decision-makers surveyed)

- Industry-reported partner reduction from 4,500+ authorized partners to 12 Pinnacle and ~300 Premier in the US, decimating the support and services ecosystem

- Widely reported late renewal penalties of up to 25% if subscriptions aren’t renewed by the anniversary date

What changed under Broadcom is not just pricing. It’s the entire purchasing model:

- Perpetual licenses eliminated. All customers forced to subscription-only

- Industry-reported ~8,000 VMware SKUs consolidated to a handful of bundles. VCF 9 forces NSX + vSAN whether you need them or not

- VCF 9 deprecates older CPUs (Ivy Bridge, Haswell, Broadwell, and earlier), forcing hardware refresh even if your servers are perfectly functional

- VCF 9’s management stack alone requires 48 vCPUs, 194 GB RAM, and 3.2 TB of provisioned storage for a Simple (single-node) deployment, and ~118 vCPUs, ~473 GB RAM, and ~5.7 TB for an HA (production) deployment where NSX Manager, VCF Operations, and VCF Automation are clustered at three nodes each (source: William Lam, Distinguished Platform Engineering Architect, VCF Division at Broadcom). VCF Automation alone in HA consumes 72 vCPUs and 288 GB RAM.

- OEMs are choosing NOT to re-certify older platforms for VCF, narrowing the supported hardware base

The message from Broadcom is clear: pay massively more for a stack you may not fully need, refresh your hardware whether or not it’s end-of-life, and accept that the partner ecosystem you relied on has been gutted. Customers face a forced decision: absorb massive increases or migrate.

The Azure Local Narrative: The “Natural” Microsoft Landing Zone

Microsoft’s counter-narrative positions Azure Local as the obvious next step for VMware refugees. The pitch is compelling on paper: Azure-connected management, Arc integration, a validated HCI design. But “compelling on paper” and “the right first choice for your environment” are not the same thing.

Azure Local carries its own baggage:

- $10/physical core/month subscription, a recurring platform rent that never stops

- Validated catalog hardware required. You cannot simply reuse your existing VMware servers unless they happen to appear in the Azure Local catalog, and validated nodes typically run $200K–$500K+ for a 4-node deployment

- S2D local disks only for storage (FC SAN support in limited preview as of November 2025, with Dell PowerFlex and Pure Storage FlashArray as initial preview partners. No iSCSI SAN support)

- Azure connectivity required. If connectivity is lost, existing VMs continue running normally but new VMs cannot be created until Azure Local syncs again (reduced functionality mode). Microsoft also offers a Disconnected operations option that brings the control plane on-premises for permanently disconnected scenarios (separate commercial arrangement).

- No stretch cluster support since 23H2 (2311 release). Within a single site, rack-aware fault domain configuration is in preview, allowing node placement across physical racks for rack-level fault tolerance.

- No Azure-Local-to-Azure-Local VM replication. The symmetric site-to-site DR story many buyers assume exists simply does not

This post exists to break the frame. It takes the capabilities that VCF bundles together, maps them across Azure Local, Hyper-V on SAN-backed failover clustering, and Hyper-V on Storage Spaces Direct, and then asks the question that vendor pitch decks never ask: which of these actually matches what your environment needs, at the lowest real cost, with the least forced change?

How to Read This Comparison

Before the tables start, some terminology matters.

Azure Local uses the same underlying Windows Server virtualization engine (Hyper-V and Windows Server Failover Clustering) as traditional Hyper-V deployments. It is not a different hypervisor. The difference is the operating model. Azure Local packages that foundation into an Azure-connected platform with Azure portal, Azure CLI, and PowerShell-driven management, Arc resource bridge integration, and a per-core subscription billing model.

When this post says “Hyper-V” as a migration target, it means traditional Windows Server 2025 failover clustering running the Hyper-V role in a customer-controlled design, split into two distinct architectures:

- Hyper-V on SAN: Windows Server Failover Clustering with external shared storage (Fibre Channel, iSCSI, or SMB3). Compute and storage scale independently. Existing enterprise arrays are fully supported.

- Hyper-V on S2D: Windows Server Failover Clustering with Storage Spaces Direct providing clustered local storage. A Microsoft-native HCI design that does not require Azure connectivity or subscription billing.

These two columns are separated throughout this post because they solve different problems. Hyper-V on SAN preserves existing storage investments and gives the broadest hardware flexibility. Hyper-V on S2D offers a software-defined storage answer without Azure Local’s platform overhead. Lumping them together hides the most important decision many operators will face.

| Platform | Storage Model | Management Model | Billing Model |

|---|---|---|---|

| VMware VCF | vSAN (bundled) or external SAN | vCenter, VMware NSX, VCF Operations (VCF 9) | Per-core subscription, 72-core minimum per socket |

| Azure Local | Storage Spaces Direct (primary), FC SAN in limited preview | Azure portal, Azure CLI, PowerShell, Arc | $10/core/month subscription |

| Hyper-V on SAN | External SAN (FC, iSCSI, SMB3) | SCVMM, WAC, FCM, PowerShell, Arc-enabled | Windows Server perpetual + optional SA |

| Hyper-V on S2D | Storage Spaces Direct (local disks) | SCVMM, WAC, FCM, PowerShell, Arc-enabled | Windows Server perpetual + optional SA |

This Isn’t Your 2012 Hyper-V

Before we compare platforms, let’s reset the baseline. Windows Server 2025 Hyper-V is the same hypervisor that powers Microsoft Azure, running the world’s largest workloads. The specifications speak for themselves:

| Capability | Hyper-V 2025 (Gen 2) | VMware VCF 9 | Advantage |

|---|---|---|---|

| Max vCPUs per VM | 2,048 | 960 | Hyper-V — 2.1x more compute per VM |

| Max RAM per VM | 240 TB | 24 TB | Hyper-V — 10x more memory per VM |

| Max Cluster Nodes | 64 (WSFC + SAN) | 64 (vSAN cluster — the only option in VCF 9, where vSAN is mandatory) / 16 (Azure Local) | Hyper-V on SAN matches the applicable VCF 9 vSAN cluster max; exceeds Azure Local by 4x |

| Max VMs per Cluster | 8,000 | 8,000 | Tie |

| GPU Partitioning (GPU-P) | Native in Windows Server 2025. Live migration with GPU-P requires NVIDIA vGPU Software v18.x+ (commercial license). | Requires NVIDIA vGPU licensing for GPU passthrough and vMotion. | Hyper-V — native GPU-P at OS level; both platforms require NVIDIA licensing for live migration with GPU. |

| Storage Replica | Built-in sync/async block replication | No equivalent without vSAN stretch | Hyper-V — included free |

| Network ATC | Declarative NIC teaming, RDMA, QoS, VLAN | VMware NSX (bundled and mandatory in VCF 9) | Hyper-V — automated network intent |

Also included in Windows Server 2025 Datacenter at no extra cost:

Live Migration (zero-downtime VM moves), Cluster Shared Volumes (CSV), Hyper-V Replica (VM-level DR), Storage Spaces Direct (HCI option), Shielded VMs / VBS / Credential Guard, Workgroup clusters (no AD required), Windows Admin Center: Virtualization Mode (vMode) (FREE, Public Preview), S2D thin provisioning, accelerated disk resync, cloud witness for quorum, and unlimited Windows Server guest VMs.

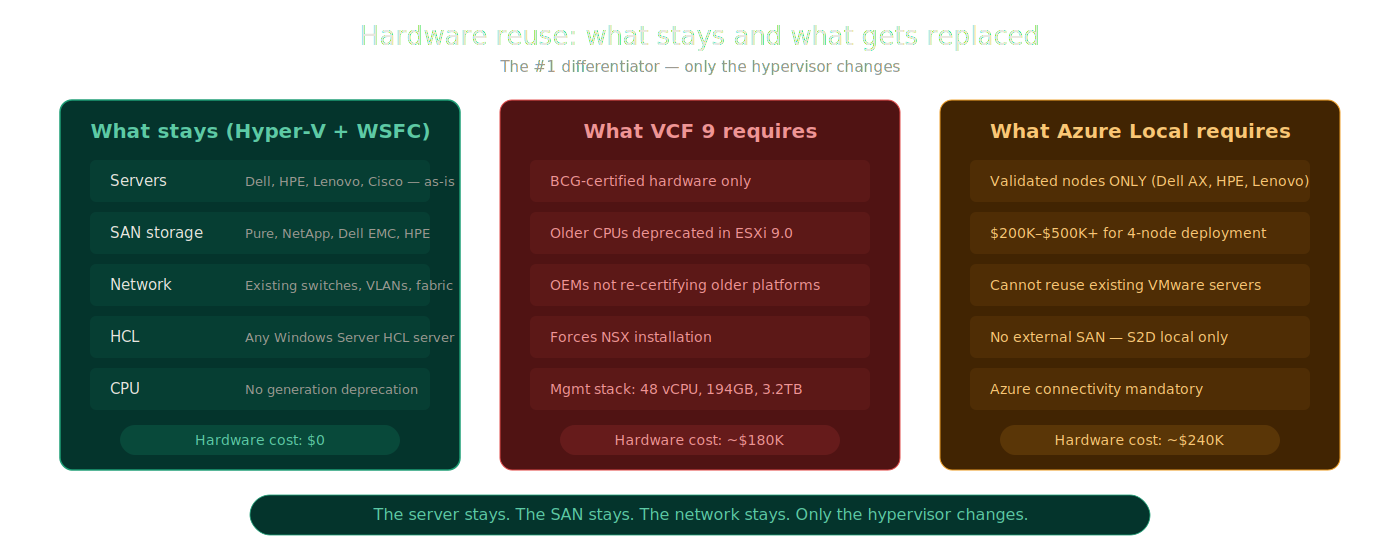

No Hardware Refresh Required

This is the #1 differentiator. Customers keep their existing servers, existing SAN, existing network. Only the hypervisor changes.

What stays with Hyper-V + WSFC:

- Servers: Dell PowerEdge, HPE ProLiant, Lenovo ThinkSystem, Cisco UCS. All work as-is.

- SAN Storage: Pure Storage, NetApp, Dell EMC, HPE. Any FC/iSCSI/SMB3 SAN works with CSV.

- Network: Existing switches, VLANs, fabric. No RDMA required (nice-to-have, not mandatory)

- HCL: Any x64 server on the Windows Server HCL, far broader than BCG or Azure Local validated nodes

- No CPU restrictions: No generation deprecation like ESXi 9.0

What VCF 9 and Azure Local require:

VMware VCF 9: BCG-certified hardware only. Older CPUs deprecated. OEMs choosing NOT to re-certify older platforms. Forces NSX installation regardless of need. Management overhead alone consumes 48 vCPUs, 194 GB RAM, 3.2 TB storage in a Simple deployment, scaling to ~118 vCPUs, ~473 GB RAM, ~5.7 TB in a production HA configuration.

Azure Local: Hardware must be listed in the Azure Local Catalog to receive Microsoft support. The catalog has three tiers: Validated Nodes (individual servers), Integrated Systems (pre-configured complete systems), and Premier Solutions (premium pre-validated systems). Catalog vendors include Dell, HPE, Lenovo, DataON, Supermicro, Bluechip, Thomas-Krenn, primeLine, and others. $200K–$500K+ for typical 4-node Premier Solution or Integrated System deployment. Cannot reuse existing VMware server hardware unless it appears in the catalog. No external SAN support (S2D local disks only, FC SAN in limited preview).

The server stays. The SAN stays. The network stays. Only the hypervisor changes.

Hardware reuse: Hyper-V vs VCF vs Azure Local

Hardware reuse: Hyper-V vs VCF vs Azure Local

The Master VCF Capability Map

This is the core comparison. Every row starts from a VCF capability or assumption, maps it to Azure Local and both Hyper-V architectures, and gives a blunt verdict.

VM Lifecycle and Mobility

| VCF Capability | Why It Matters | Azure Local | Hyper-V on SAN | Hyper-V on S2D | Verdict |

|---|---|---|---|---|---|

| VM provisioning and templates | Repeatable, fast deployments reduce manual drift | ARM templates, Bicep, Terraform via Arc resource bridge. Azure portal VM creation. | SCVMM service templates, VM templates in library. WAC VM creation. PowerShell New-VM. | Same as SAN column—SCVMM, WAC, PowerShell. | All platforms deliver workable VM provisioning. VCF may feel more polished through vCenter. Hyper-V answers depend on which management tool you invest in. |

| Live migration | Non-disruptive host maintenance, load balancing | Fully supported within cluster. | Fully supported. Compression, RDMA offload, shared-nothing options in WS2025. | Fully supported within S2D cluster. | Near parity. Live migration is mature across all platforms. Not a VCF-exclusive advantage. |

| Host maintenance workflows | How disruptive is patching and hardware work? | Lifecycle Manager via Azure portal or PowerShell only. CAU is explicitly unsupported on Azure Local — Microsoft lists “Manual runs of Cluster-Aware Updating” in the unsupported interfaces for updates. | Cluster-Aware Updating (CAU). Drain, patch, resume. SCVMM can orchestrate. | Same as SAN—CAU plus optional SCVMM orchestration. | VCF lifecycle manager is more integrated. Hyper-V CAU is entirely functional but less elegant. All platforms achieve rolling maintenance without VM downtime. |

| Storage migration for running VMs | Moving workloads without forcing all storage decisions on day one | Not applicable. | Strongest answer. Storage Live Migration moves VHDs between CSVs, LUNs, or SMB shares with zero downtime. Array-native tools add further flexibility. Workloads can land on interim storage and migrate later. | Not applicable. | Hyper-V on SAN wins decisively. Azure Local has no storage migration capability by design — its HCI architecture makes the concept meaningless. Hyper-V on SAN gives you full freedom to move data between storage targets without downtime. |

| VMware-to-target migration | How fast and clean is the exit from VMware? | Azure Migrate supported. Must land on S2D or preview FC SAN. Hardware must be Azure Local validated. | SCVMM V2V, third-party tools (Veeam, Zerto, Carbonite). Land on any supported SAN target. No hardware catalog restriction. | SCVMM V2V, third-party tools (Veeam, Zerto). Land on S2D storage. | Hyper-V on SAN is the easiest first landing zone when preserving storage optionality matters. No hardware catalog lock. No per-core subscription on day one. |

Storage and Data Services

| VCF Capability | Why It Matters | Azure Local | Hyper-V on SAN | Hyper-V on S2D | Verdict |

|---|---|---|---|---|---|

| External SAN support | Preserving existing storage investments | Limited. FC SAN in preview (Nov 2025, announced at Microsoft Ignite). Initial preview partners: Dell PowerFlex and Pure Storage FlashArray. No iSCSI SAN. | Native strength. FC, iSCSI, SMB3 all fully supported. Any enterprise array with Windows Server certification works. | Not the design center—S2D uses local disks. | Major differentiator for Hyper-V on SAN. If you own a SAN today, Hyper-V uses it. Azure Local mostly cannot. |

| HCI / local storage | All-local-disk simplicity | Native design center. S2D with Azure-managed lifecycle. | Not the primary design center. | Native S2D—same technology as Azure Local, without Azure subscription or connectivity. | Azure Local and Hyper-V on S2D are the real peers here. Both use S2D. The difference is operating model and cost. |

| Storage protocol flexibility | Interoperability, vendor choice, migration options | S2D internal (SMB3 between nodes). FC SAN in preview. No iSCSI SAN. | FC, iSCSI, SMB3. Full enterprise storage ecosystem. | S2D internal. ReFS or NTFS on CSVs. | Hyper-V on SAN scores highest on protocol flexibility. Azure Local’s storage story is narrow by design. |

| Storage expansion | How painful is adding capacity? | Add drives to existing nodes or add new nodes (compute + storage together). S2D rebalances across the cluster. | Independent scaling. Add storage without compute. Add compute without storage. | Add drives to existing nodes or add new nodes. S2D rebalances across the cluster. | Hyper-V on SAN wins on independent scaling. Both HCI platforms (Azure Local and Hyper-V on S2D) can add drives or nodes, but always tie compute and storage expansion together. |

| Array-native migration tooling | Moving data smarter than the hypervisor can | Not applicable—S2D is internal. FC SAN preview too early for tooling. | Strong advantage. Pure Storage, NetApp, Dell PowerStore, HPE Alletra all offer array-native data mobility for Hyper-V. | Not applicable—storage is internalized. | This row strongly reinforces the SAN argument. Customers with enterprise arrays keep proven data mobility. |

| Array-native replication and DR | Preserving proven storage-side DR patterns | Not applicable for S2D. FC SAN preview too new for replication. | Strong advantage. Array-native sync and async replication works alongside Hyper-V Replica and Storage Replica. | Storage Replica (volume-level, sync or async). No array-native option. | Hyper-V on SAN preserves mature storage DR investments. |

BCDR and Multi-Site Resilience

| VCF Capability | Why It Matters | Azure Local | Hyper-V on SAN | Hyper-V on S2D | Verdict |

|---|---|---|---|---|---|

| Hypervisor-level replication | VM replication without storage dependency | Hyper-V Replica is technically available at the OS level (documented for Azure Local 2311.2+), but it is not integrated into Azure Local’s management plane, not exposed through Azure portal, and not part of Azure Local’s supported operational model. No Azure-portal-managed Azure-Local-to-Azure-Local VM replication exists. | Hyper-V Replica. Async VM replication. 30-second to 15-minute intervals. Free. | Hyper-V Replica. Same capabilities. | Hyper-V wins clearly. Hyper-V Replica provides simple, free, built-in VM DR that is fully integrated into the management experience. Azure Local has it at the OS level but not operationally. |

| Storage-level replication | Site resilience, planned migration, failback | Not applicable. Azure Local uses S2D with no external storage target and no stretch cluster support since 23H2. No storage-level replication exists. | Broadest choice. Storage Replica + array-native replication (Pure Storage ActiveCluster, NetApp MetroCluster, Dell PowerMax SRDF, HPE Peer Persistence). Dual replication layers. | Storage Replica (sync/async). No array-native option since storage is internalized. | Hyper-V on SAN offers the deepest replication toolkit. Hyper-V on S2D has Storage Replica. Azure Local has nothing at this layer. |

| Campus / multi-site clustering | Near-metro, campus, or two-datacenter resilience | No stretch cluster support since 23H2 (2311 release). This is a confirmed, documented breaking change from Azure Local. Within a single site, rack-aware fault domains allow node placement across physical racks for rack-level fault tolerance. | Mature WSFC multi-site (stretched) cluster support on Windows Server. Site-aware failover policies prefer the local site under normal conditions and fail over to the secondary site on failure. Backed by a shared FC or iSCSI SAN with synchronous replication, or a dual-fabric active/active SAN. Supported since WS2016, current through WS2025. Microsoft’s official term is “stretched cluster” or “multi-site cluster”—“campus cluster” is informal industry usage. | S2D-backed stretched cluster using Storage Replica for cross-site volume replication. Supported in Windows Server 2019 with S2D and later. Requires planning for network latency, quorum witness placement, and post-failover rebalancing. Windows Server only. Not available in Azure Local. | Windows Server Hyper-V (both SAN and S2D) is the only current Microsoft platform with a documented and supported multi-site cluster story. Azure Local dropped this capability at 23H2. |

| Site failover and failback | How recovery actually works | ASR (Azure-directed only). No native site-to-site failover between Azure Local instances. | Hyper-V Replica failover. Storage Replica failover. SAN-native failover. ASR. Multiple options. | Hyper-V Replica failover. Storage Replica failover. ASR. | Hyper-V offers more on-prem-to-on-prem recovery flexibility. Azure Local pushes toward Azure-directed recovery. |

| Azure-Local-to-Azure-Local replication | Many buyers assume this exists | Gap. No native Azure-Local-to-Azure-Local VM replication. This is the most misunderstood assumption in the market. | Not applicable—Hyper-V uses Hyper-V Replica and SAN-native replication. | Not applicable. | This gap exposes a key Azure Local assumption. Hyper-V already solves site-to-site with Hyper-V Replica. |

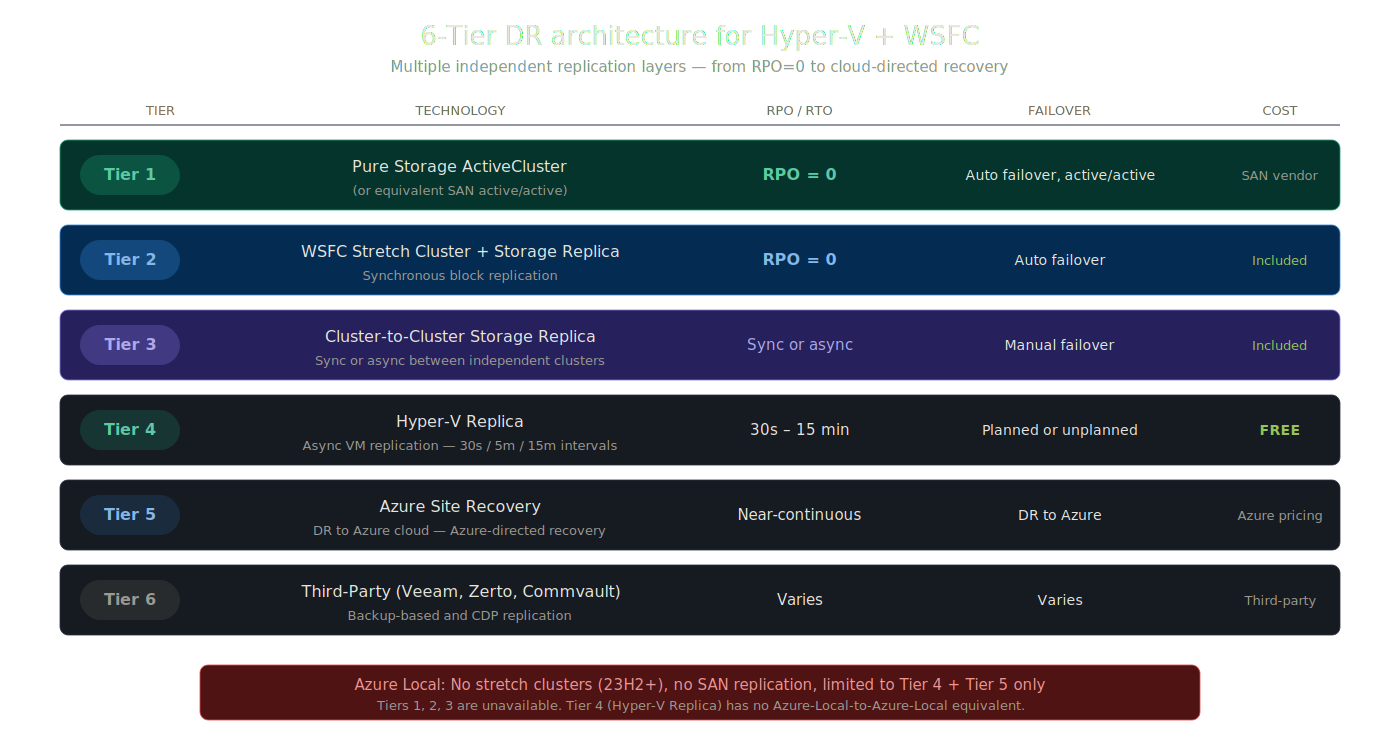

6-Tier DR architecture available to Hyper-V on SAN

6-Tier DR architecture available to Hyper-V on SAN

The 6-Tier DR Architecture

When Hyper-V is backed by enterprise SAN storage, the DR options are not just adequate. They are enterprise-grade and multi-layered. Here’s the full resilience stack available to Hyper-V + WSFC deployments:

| Tier | Technology | RPO | Failover Type | Cost |

|---|---|---|---|---|

| Tier 1 | Pure Storage ActiveCluster (or equivalent SAN active/active) | RPO = 0 | Automatic failover, active/active | SAN vendor licensing |

| Tier 2 | WSFC Stretch Cluster + Storage Replica | RPO = 0 (synchronous) | Automatic failover | Included in Datacenter |

| Tier 3 | Cluster-to-Cluster Storage Replica | Sync or async | Manual failover | Included in Datacenter |

| Tier 4 | Hyper-V Replica | 30s / 5m / 15m intervals | Planned or unplanned | FREE — built into Windows Server |

| Tier 5 | Azure Site Recovery | Near-continuous | DR to Azure cloud | Azure consumption pricing |

| Tier 6 | Third-Party (Veeam, Zerto, Commvault) | Varies | Varies | Third-party licensing |

Azure Local’s DR story by comparison: No stretch clusters (23H2+), no SAN replication, limited to ASR + Hyper-V Replica only. No Tier 1, 2, or 3 options available.

Pure Storage Integration: A Concrete Example

The plan called for a concrete SAN example, and Pure Storage is one of the strongest:

- FlashArray //X certified for Windows Server: full MPIO, ODX, WAC extension, PowerShell SDK

- ActiveCluster: Synchronous active/active, RPO=0, zero downtime failover between sites

- ActiveDR: Near-synchronous asynchronous replication, RPO < 60 seconds

- Data mobility: Array-native migration between FlashArrays moves data at the storage layer, faster and less risky than hypervisor-level migration

- No backup limitations: Full integration with Veeam, Commvault, and native Windows Server Backup

This kind of deep storage integration is exactly what Hyper-V on SAN preserves and what Azure Local cannot leverage. Customers running Pure Storage, NetApp, Dell EMC, or HPE SANs today keep their entire data services stack intact when they move to Hyper-V.

Management and Day-2 Operations

| VCF Capability | Why It Matters | Azure Local | Hyper-V on SAN | Hyper-V on S2D | Verdict |

|---|---|---|---|---|---|

| Central management plane | VMware’s strongest area | Azure portal + CLI + PowerShell. WAC via Arc in preview. No rich local console. | SCVMM (closest vCenter equivalent). WAC for lighter ops. FCM for cluster. PowerShell for everything. Arc-enabled SCVMM extends to Azure. | Same tool set as SAN. | VMware still leads on single-pane polish. Azure Local pushes to cloud. Hyper-V requires tool-layer decisions. |

| Multi-cluster operations | Beyond a single cluster | Azure portal fleet-level view across Arc-registered instances. | SCVMM multi-cluster management. Arc-enabled SCVMM extends estate to Azure. Cluster Sets group multiple clusters. | Same as SAN. | Azure Local has the Azure-connected fleet story. Hyper-V with SCVMM has the on-prem estate story. |

| Patching and lifecycle | Downtime planning, compliance | Azure Local Lifecycle Manager via portal. | CAU for rolling host updates. SCVMM can coordinate. WSUS/SCCM for broader patching. | Same as SAN. | VCF and Azure Local more opinionated. Hyper-V CAU is functional but less unified. |

| Monitoring | Usable telemetry | Azure Monitor integration. Portal dashboards. | WAC dashboards. SCOM for enterprise monitoring. Azure Monitor via Arc. Defender for Cloud. | Same as SAN. | All achieve adequate observability. Hyper-V may need more tool assembly. |

| Automation | Scriptability over GUI polish | Azure CLI, Azure PowerShell, ARM/Bicep/Terraform. | PowerShell-native. Deep WMI surface. SCVMM module. Full Hyper-V module. Arc REST APIs. | Same as SAN. | Hyper-V compares well. PowerShell was built for this. |

| RBAC and delegation | Controlled operations | Azure RBAC integrated. Built-in Azure Local roles. | SCVMM provides role-based delegation. AD-based permissions. | Same as SAN. | Azure Local has the modern RBAC story through Azure. Hyper-V needs SCVMM for comparable delegation. |

| Server roles on host | Running additional services alongside VMs | Hypervisor-only. Cannot run full Windows Server roles. | Full Windows Server roles available. File server, print server, DNS, DHCP—anything Windows Server supports alongside Hyper-V. | Same as SAN. | Hyper-V wins on host flexibility. Azure Local is locked to hypervisor-only operation. |

What Hyper-V Does That Azure Local Cannot

This summary table crystallizes the operational differences:

| Capability | Azure Local | Hyper-V + WSFC |

|---|---|---|

| Stretch Clusters | ✗ Dropped in 23H2 | ✓ Full support + Storage Replica |

| External SAN | ✗ S2D local disks only (FC preview) | ✓ FC, iSCSI, SMB3 — any SAN |

| Max Cluster Nodes | △ 16 nodes | ✓ 64 nodes (SAN) |

| Air-Gapped Operation | ✗ Azure connectivity required (reduced functionality mode if disconnected; Disconnected operations option available separately) | ✓ Fully offline, no dependency |

| Server Roles on Host | ✗ Hypervisor-only | ✓ Full Windows Server roles |

| Licensing Model | △ $10/core/mo subscription | ✓ Perpetual (buy once) |

| Hardware Requirement | ✗ Must be in Azure Local Catalog (Dell, HPE, Lenovo, DataON, Supermicro, and others; $200K+ typical) | ✓ Any Windows Server HCL ($0) |

| Guest VM Licensing | △ Per-physical-core subscription (covers unlimited guests) | ✓ Unlimited (Datacenter) |

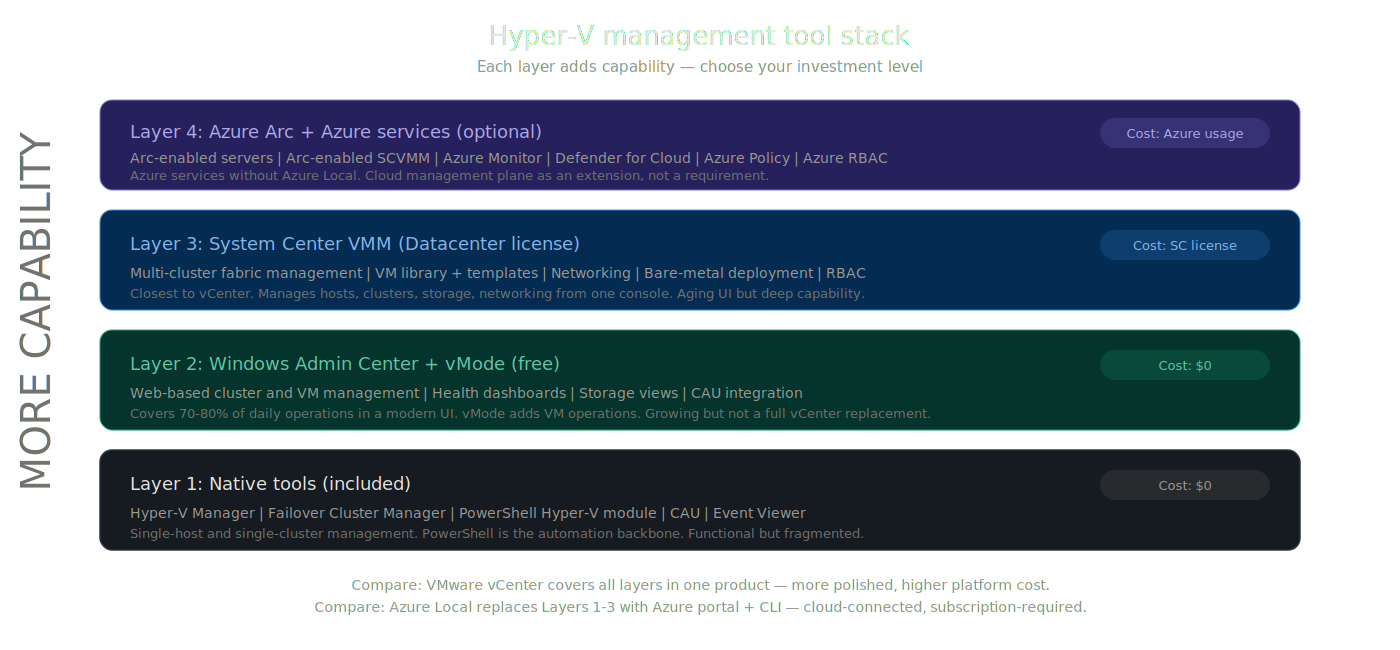

Management and Tooling Transparency Matrix

The master table shows what the platforms can do. This second table shows what it feels like to operate Hyper-V day to day.

One of the biggest reasons people default to VMware is the belief that Hyper-V lacks a credible operational control plane. The honest answer isn’t to pretend Hyper-V has a neat vCenter equivalent. It’s to show the management story in layers and let operators decide.

| Capability | VMware VCF | Azure Local | Hyper-V Native Only | Hyper-V + WAC | Hyper-V + SCVMM | Verdict |

|---|---|---|---|---|---|---|

| Primary management experience | vCenter—single pane. Mature, polished, deeply integrated. | Azure portal for VM and cluster lifecycle. CLI and PowerShell for ops. WAC preview via Arc. | Fragmented. Hyper-V Manager, FCM, PowerShell. | Improved. Web-based cluster and VM management. Covers 70-80% of daily tasks. WAC Virtualization Mode (vMode) adds modern VM operations. | Closest to vCenter. Multi-host, multi-cluster, VM library, networking, storage fabric. | VMware leads on polish. SCVMM is capable but aging. WAC + vMode is promising. Azure Local pushes to the Azure portal. |

| Cluster deployment | SDDC Manager automates bring-up. | Azure portal-driven. Cloud-validated. Hardware must match catalog. | Manual. PowerShell or FCM. Flexible but labor-intensive. | WAC assists cluster creation. Improves over pure manual. | SCVMM provides fabric onboarding and bare-metal deployment. | VCF and Azure Local more turnkey. Hyper-V more flexible but more engineering on day one. |

| Multi-cluster visibility | vCenter manages multiple clusters. Aria extends to fleet. | Azure portal fleet-level view across all Arc instances. | Weakest. Each cluster independent. | Limited per-gateway. | SCVMM manages multiple clusters. Arc-enabled SCVMM extends to Azure portal. | Hyper-V at scale needs SCVMM or Arc-enabled SCVMM. Native-only isn’t viable for multi-cluster. |

| Patching / lifecycle | VCF Lifecycle Manager. Tested bundles. | Azure Local Lifecycle Manager. Updates via Azure. | CAU handles rolling updates. Manual firmware/driver. | WAC surfaces CAU integration. | SCVMM can orchestrate. WSUS/SCCM integration. | Hyper-V patching works. Not as turnkey as VCF or Azure Local lifecycle managers. |

| Monitoring / health | vCenter alarms, Aria Operations. | Azure Monitor, portal health views. Cloud-connected. | Event logs, PerfMon, basic cluster health in FCM. | WAC provides cluster health dashboards, VM metrics, storage health. Solid improvement. | SCOM integration. SCVMM fabric health views. | Hyper-V achieves good observability, but from assembled parts, not one clean default pane. |

| Automation surface | PowerCLI, vSphere REST API, Terraform. Mature ecosystem. | Azure CLI, PowerShell, ARM/Bicep/Terraform. | PowerShell Hyper-V module is comprehensive. WMI surface. CIM cmdlets. Full cluster module. | WAC is GUI-focused—not the automation core. | SCVMM PowerShell module adds fabric automation. | Hyper-V’s automation story is genuinely strong. PowerShell was purpose-built for this. |

| RBAC / delegation | vCenter roles. Granular, well-documented. | Azure RBAC. Built-in roles. Cloud-native. | AD-based permissions. Limited granularity without SCVMM. | Gateway-level access control. Limited. | SCVMM self-service and delegated admin. Closer to vCenter delegation. | Azure Local has modern RBAC. VMware has mature local RBAC. Hyper-V needs SCVMM to compete. |

Hyper-V management tool stack: native → WAC → SCVMM → Arc

Hyper-V management tool stack: native → WAC → SCVMM → Arc

Operator Reality by Domain

Tables map capabilities. These sections answer the practical questions operators actually ask.

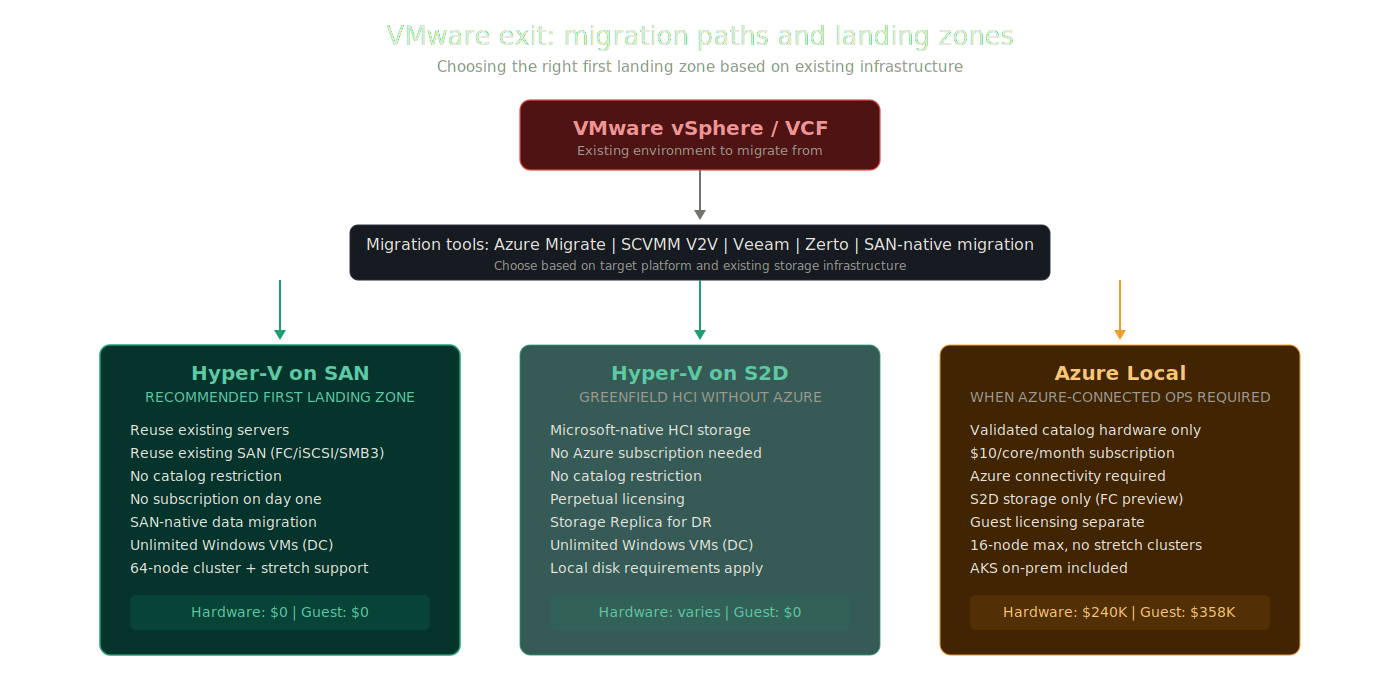

VM Migration: Getting Off VMware

VMware exit: migration paths and landing zone comparison

VMware exit: migration paths and landing zone comparison

The migration path from VMware to any Hyper-V target is well-established. The key tools:

Azure Migrate is Microsoft’s primary migration tool. It supports discovery of VMware environments, assessment of VM readiness, and agentless replication to Hyper-V targets, including Azure Local, Windows Server Hyper-V, and Azure IaaS.

SCVMM V2V conversion provides direct VMware-to-Hyper-V VM conversion. SCVMM connects to vCenter, selects VMs, and converts them to Hyper-V format.

Third-party tools like Veeam, Zerto, and Carbonite offer VMware-to-Hyper-V migration with pre-migration testing and rollback.

The critical question is: where does the migrated VM land?

With Hyper-V on SAN, the VM lands on existing SAN infrastructure. If the source VMware environment used the same SAN (extremely common), the storage migration may be as simple as re-presenting existing LUNs and converting VMDK to VHDX. Some array vendors (Pure Storage, NetApp, Dell) offer tools that move data at the storage layer, bypassing the hypervisor entirely. This is faster, less risky, and preserves existing storage tiering and replication policies.

With Azure Local, the VM lands on S2D storage inside the cluster. The source SAN cannot be reused directly unless it happens to be Dell PowerFlex or Pure Storage FlashArray (both in FC SAN preview as of November 2025). The hardware must be from the Azure Local catalog. This narrows the migration path and may force a hardware purchase before migration can begin.

With Hyper-V on S2D, the VM lands on S2D storage. No catalog restriction, but target hosts need local drives configured for S2D. More flexible than Azure Local, less flexible than SAN-backed Hyper-V.

Bottom line: Hyper-V on SAN provides the lowest-friction first landing zone for VMware exits. You reuse the servers, you reuse the SAN, you convert the VMs, and you’re running. Azure Local adds hardware procurement, catalog validation, and subscription enrollment before you move your first VM.

Quick Reference: VM Migration Comparison

| Migration Factor | Hyper-V on SAN | Hyper-V on S2D | Azure Local |

|---|---|---|---|

| Primary migration tools | Azure Migrate, SCVMM V2V, Veeam, Zerto | Azure Migrate, SCVMM V2V, Veeam, Zerto | Azure Migrate |

| Target storage | Existing SAN (FC, iSCSI, SMB3) | S2D local disks | S2D local disks (FC SAN in preview) |

| Hardware requirement | Any Windows Server HCL hardware | Any Windows Server HCL hardware | Must be in Azure Local Catalog (Dell, HPE, Lenovo, DataON, Supermicro, and others) |

| Array-native data mobility | ✓ Pure, NetApp, Dell, HPE tools | ✗ Not applicable | ✗ Not applicable |

| New hardware required day one | ✗ Reuse existing ($0) | ✗ Reuse existing ($0) | ✓ $200K–$500K+ |

| Existing SAN reuse | ✓ Full | ✗ Not designed for it | ✗ S2D only (FC preview) |

| Platform subscription before migration | ✗ None | ✗ None | ✓ $10/core/month from day 1 |

Storage Replication and Data Protection

Once VMs are on Hyper-V, the DR story is not just adequate. It is multi-layered in a way Azure Local cannot match.

Hyper-V Replica provides asynchronous VM replication built into Windows Server at no additional cost. It supports replication intervals of 30 seconds, 5 minutes, or 15 minutes. It works between any two Hyper-V hosts or clusters. It supports planned and unplanned failover with test failover capabilities. It is simple, proven, and free.

Azure Local has no equivalent. There is no Azure-Local-to-Azure-Local VM replication feature.

Storage Replica provides synchronous or asynchronous block-level replication between volumes. Available in both Datacenter edition (unlimited volumes and sizes) and Standard edition (single volume, 2 TB max per volume). The full-featured Datacenter implementation is the foundation of stretch cluster designs.

Array-native replication (Hyper-V on SAN) adds a third layer. Organizations running Pure Storage ActiveCluster, NetApp MetroCluster, Dell PowerMax SRDF, or HPE Peer Persistence maintain their existing storage-level replication alongside Hyper-V Replica and Storage Replica. Three independent replication layers, each solving a different RPO/RTO requirement.

Quick Reference: Data Protection Comparison

| DR Capability | Hyper-V on SAN | Hyper-V on S2D | Azure Local |

|---|---|---|---|

| VM-level replication | ✓ Hyper-V Replica (free, built-in) | ✓ Hyper-V Replica (free, built-in) | ✗ No native VM-to-VM replication |

| Volume-level replication | ✓ Storage Replica (sync/async) | ✓ Storage Replica (sync/async) | ✗ Not applicable |

| Array-native replication | ✓ Pure ActiveCluster, NetApp MetroCluster, Dell PowerMax SRDF, HPE Peer Persistence | ✗ Not applicable | ✗ Not applicable |

| RPO = 0 option | ✓ Array active/active (SAN-layer) | ✗ No | ✗ No |

| Azure-directed DR | ✓ ASR (optional add-on) | ✓ ASR (optional add-on) | ✓ ASR (primary path) |

| On-prem site-to-site DR | ✓ Multiple independent options | ✓ Storage Replica + Hyper-V Replica | ✗ No native path |

| Total replication layers | 3 (VM + volume + array) | 2 (VM + volume) | 1 (ASR only) |

Patching and Host Maintenance

All platforms support rolling maintenance: drain VMs, patch, bring back, next node. The difference is orchestration.

VCF provides the most integrated lifecycle through SDDC Manager and vSphere Update Manager, but remember, that management stack alone consumes 48 vCPUs, 194 GB RAM, and 3.2 TB in a minimal deployment, scaling to ~118 vCPUs and ~473 GB RAM in production HA.

Azure Local delivers updates through Lifecycle Manager from the Azure portal, integrating OS, drivers, and firmware per the OEM’s Solution Builder Extension. Smooth, but requires Azure connectivity.

Hyper-V uses Cluster-Aware Updating (CAU), which automates rolling updates across cluster nodes. CAU drains VMs, applies updates, reboots, and moves to the next node. It works. It’s not glamorous. SCVMM can add orchestration. WSUS or SCCM handle update sourcing.

Quick Reference: Patching and Maintenance Comparison

| Maintenance Factor | Hyper-V on SAN | Hyper-V on S2D | Azure Local |

|---|---|---|---|

| Rolling host updates | ✓ Cluster-Aware Updating (CAU) | ✓ Cluster-Aware Updating (CAU) | ✓ Azure Local Lifecycle Manager |

| OS + firmware + driver coordination | Manual / SCVMM / WSUS | Manual / SCVMM / WSUS | Integrated via OEM Solution Builder Extension |

| Azure connectivity required | ✗ No | ✗ No | ✓ Yes |

| Air-gap / offline patching | ✓ WSUS offline | ✓ WSUS offline | ✗ Not supported |

| Update tooling | CAU + WSUS/SCCM | CAU + WSUS/SCCM | Azure Lifecycle Manager (portal) |

| Per-node VM downtime | ✗ Rolling—no VM downtime | ✗ Rolling—no VM downtime | ✗ Rolling—no VM downtime |

Multi-Site Resilience

For organizations with two or more datacenters, Hyper-V on SAN offers the most flexible multi-site design:

- Stretch clustering with shared SAN (synchronous replication or dual-fabric SAN)

- Hyper-V Replica for async VM replication between any two sites

- Storage Replica for volume-level sync/async replication

- Array-native replication (Pure ActiveCluster, NetApp MetroCluster, etc.) for storage-layer DR

- Azure Site Recovery for Azure-directed recovery when cloud DR is desired

Azure Local’s multi-site story is constrained: no stretch clusters since 23H2, no Azure-Local-to-Azure-Local VM replication, and ASR is Azure-directed (failover goes to Azure, not to a second on-prem site).

Quick Reference: Multi-Site Resilience Comparison

| Multi-Site Capability | Hyper-V on SAN | Hyper-V on S2D | Azure Local |

|---|---|---|---|

| Stretched / multi-site cluster | ✓ WSFC with shared SAN or Storage Replica | ✓ S2D stretch with Storage Replica (WS2019+) | ✗ Dropped in 23H2 (2311) |

| VM replication across sites | ✓ Hyper-V Replica (free, built-in) | ✓ Hyper-V Replica (free, built-in) | ✗ No native path |

| Storage replication | ✓ Storage Replica + array-native | ✓ Storage Replica | ✗ No on-prem-to-on-prem path |

| Automatic site failover | ✓ WSFC site policy + array active/active | ✓ WSFC site policy | ✗ Not supported (ASR is Azure-directed) |

| Azure-directed DR | ✓ ASR (optional add-on) | ✓ ASR (optional add-on) | ✓ ASR (primary path) |

| On-prem-to-on-prem recovery | ✓ Multiple independent options | ✓ Storage Replica path | ✗ Not supported |

Five-Year TCO Reality Check

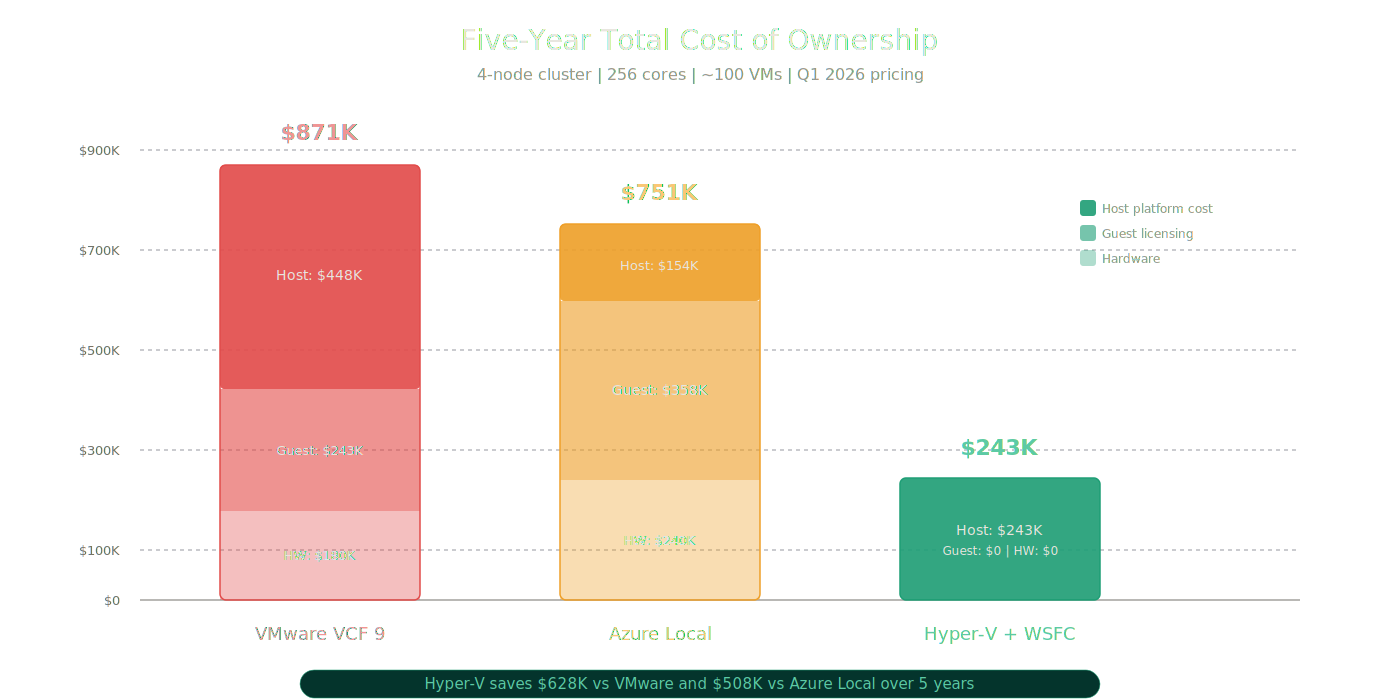

This section uses the actual calculated figures from the TCO model, not generic estimates.

The Scenario

- 4 nodes, dual-socket, 32 cores per socket (64 cores per node, 256 total cores)

- ~100 Windows Server VMs

- Pricing approximate, Q1 2026

VMware VCF 9

| Line Item | Detail |

|---|---|

| Cost Model | Subscription ($350/core/yr) |

| Annual Host Cost | $89,600/year (host hypervisor rent only) |

| 5-Year Host Cost | $448,000 (strictly the VCF stack) |

| Guest Licensing | ~$108,336 upfront + ~$27,000/year (Windows Datacenter buy-in + SA) |

| 5-Year Guest Cost | ~$243,336 |

| Hardware | ~$180,000 (requires new All-NVMe ReadyNodes) |

| SAN Support | Yes — FC, NFS, vVols (+ vSAN included) |

| Max Nodes | 64 |

| 5-Year Total Cost of Ownership | ~$871,336 (Host + Guest + Hardware) |

Azure Local

| Line Item | Detail |

|---|---|

| Cost Model | Hybrid Subscription ($10/core/month) |

| Annual Host Cost | $30,720/year (host fee only) |

| 5-Year Host Cost | $153,600 (strictly the host rent) |

| Guest Licensing | ~$71,500/year (Windows Server subscription at $23.30/physical core/month × 256 cores — covers unlimited Windows Server guest VMs) |

| 5-Year Guest Cost | ~$357,500 |

| Hardware | ~$240,000 (requires new validated All-NVMe nodes) |

| SAN Support | No — S2D local disks only |

| Max Nodes | 16 (no stretch clusters) |

| 5-Year Total Cost of Ownership | ~$751,100 (Host + Guest + Hardware) |

Important: Azure Hybrid Benefit can dramatically change this math. If the customer already holds Windows Server Datacenter licenses with active Software Assurance (the same assumption used in the Hyper-V column below), Azure Hybrid Benefit waives both the $10/core/month host fee and the Windows Server guest subscription. Under AHB, the Azure Local 5-year cost becomes: $240,000 (hardware) + $0 (host) + $0 (guest) = ~$240,000. This makes Azure Local cost-competitive with Hyper-V on SAN when existing hardware cannot be reused. The TCO table above shows Azure Local without AHB to illustrate the cost for customers who do not have existing Datacenter SA licenses. Both scenarios should be evaluated.

Hyper-V + WSFC (Windows Server Datacenter)

| Line Item | Detail |

|---|---|

| Cost Model | Annual Enrollment (EA / SA) |

| Upfront Buy-in | ~$108,336 (Datacenter host core licenses) |

| Annual Cost | ~$27,000/year (SA for support) |

| 5-Year Host Cost | ~$243,336 (upfront license + 5yr SA) |

| Guest Licensing | $0 extra — already covered in your upfront host buy-in |

| 5-Year Guest Cost | $0 |

| Hardware | $0 — reuse existing servers w/ HBA |

| SAN Support | Yes — FC, iSCSI, SMB3 |

| Max Nodes | 64 + full stretch cluster support |

| 5-Year Total Cost of Ownership | ~$243,336 (Host + Guest + Hardware) |

Five-year TCO comparison across all three platforms

Five-year TCO comparison across all three platforms

Five-year TCO stacked cost breakdown: Host + Guest + Hardware

Five-year TCO stacked cost breakdown: Host + Guest + Hardware

The TCO Verdict

Hyper-V lowers 5-year TCO by $620K+ vs VMware and $500K+ vs Azure Local.

The three cost advantages that drive this:

Windows Server Datacenter includes unlimited Windows Server guest VMs. Neither VCF nor Azure Local includes guest OS licensing. Azure Local’s guest licensing alone costs $357,500 over five years in this scenario, more than Hyper-V’s entire platform cost.

Hyper-V on SAN reuses existing hardware. No catalog lock, no net-new validation purchase. VCF requires new ReadyNodes ($180K). Azure Local requires new validated nodes ($240K). Hyper-V requires $0 in new hardware.

No recurring platform subscription. Windows Server is a perpetual license. SA is optional and provides upgrade rights and support, but the platform does not stop functioning if you stop paying. Both VCF and Azure Local are subscription-only. Stop paying, stop running.

These advantages are most pronounced in environments running primarily Windows workloads with existing SAN infrastructure, which describes a large percentage of the organizations currently reassessing their VMware position.

The Transparency Section

If the post stopped here, it would be a Hyper-V sales pitch. It would also be dishonest. The credibility of this argument depends on acknowledging where Hyper-V is weaker.

1. Hyper-V management is weaker than vCenter as a unified day-to-day experience.

This is real. vCenter gives administrators a single console for clusters, VMs, storage, networking, monitoring, and lifecycle management. Hyper-V operators split their time across Failover Cluster Manager, Windows Admin Center, SCVMM (if deployed), PowerShell, and potentially Azure portal through Arc. No single tool covers everything vCenter does in one place.

2. Microsoft failed to evolve SCVMM into what the market needed, and the consequences are real.

The vSphere 7 and 8 era gave VMware customers a formidable management and feature stack, built piece by piece, but deeply integrated when assembled: vCenter Server as the polished management plane, VMware NSX-T for distributed networking and micro-segmentation, vSAN for hyperconverged storage, VMware Site Recovery Manager (SRM) for automated site failover DR, and vRealize Operations Manager for capacity planning, trending, and analytics. Microsoft’s answer to that stack was SCVMM, and Microsoft chose not to build it to the same level.

SCVMM still works. It still has capabilities nothing else in the Microsoft stack replicates: service templates, VM library management, fabric management, multi-cluster operations, bare-metal deployment. But it never became a credible enterprise multi-tenancy platform. That is why large enterprises and MSPs building multi-tenant private clouds (the ones who need tenant isolation, delegated self-service portals, and deep network segmentation at scale) found Hyper-V a poor fit by comparison. That is a Microsoft product investment failure, not a Hyper-V platform failure. The practical consequence for those customer segments, however, is the same.

The honest VMware cost counterpoint: Before crediting VMware with solving what SCVMM did not, the price of that solution was significant when assembled outside of VCF. A full modern vSphere 8 environment required not just vCenter Server, but vSAN (a high per-core add-on), NSX-T for distributed networking (one of the most expensive VMware add-ons, sold separately), SRM requiring licenses on both the protected and recovery sites, and vRealize Operations as yet another separate subscription. Assembling that stack for a medium enterprise meant $150–$250 per core per year before hardware. VCF 9 bundles all of this, but Broadcom’s bundling means you now pay for VMware NSX, vSAN, VCF Operations, and VCF Fleet Management whether you use them or not, at VCF 9’s pricing floor with a 72-core-per-socket minimum.

Note for MSPs and hosters: VCF 9.0 dropped VMware Cloud Director (VCD) support entirely with no migration path. VCD was the primary multi-tenancy and tenant isolation layer that MSPs and hosting providers relied on for building vSphere-based multi-tenant clouds. VCF 9’s replacement is its own “Sovereign Multi-Tenancy” model. If you operated a VCD-based multi-tenant cloud, you are facing a forced architectural redesign, not just a price increase. This context matters when MSPs evaluate Hyper-V as a VCF alternative: the VMware multi-tenancy story they depended on no longer exists in VCF 9.

3. Windows Admin Center shows future promise but does not equal present-day parity.

WAC has improved meaningfully as a cluster and VM management interface. WAC Virtualization Mode (vMode) adds modern VM operations capabilities at no cost. The preview integration with Azure Local through Arc shows a direction that could eventually provide a modern, web-based management experience. But today, WAC does not replace SCVMM, does not replace Failover Cluster Manager for all scenarios, and is not a vCenter equivalent. Treating WAC as the answer to the management gap overstates where it is today.

4. Hyper-V wins on flexibility and economics more than on polish.

This is the honest frame. Hyper-V’s advantage is that it does what you need for less money, with more hardware flexibility, and more storage freedom. Its disadvantage is that the operational experience is less integrated and requires more tool-layer decisions. For organizations that value polish over economics, VMware may still be the better fit, but those organizations need to be honest about what that polish costs.

5. Azure Local has legitimate strengths.

Azure Local is not a bad product. It is a real, capable platform with genuine value for the right customer profile. The transparency section would be incomplete without acknowledging that.

6. Native reporting and capacity planning are a DIY exercise on Hyper-V.

Hyper-V has no native capacity trending, performance analytics dashboards, or executive reporting equivalent to VMware Aria Operations (VCF Operations in VCF 9). Achieving comparable visibility on a Hyper-V environment requires deliberate assembly: Azure Monitor workbooks, System Center Operations Manager (SCOM), or third-party tools such as VirtualMetric or Turbonomic. Azure Local customers gain Azure portal dashboards and Azure Monitor integration automatically. Traditional Hyper-V requires those integrations to be built by the operations team. This is a real operational gap, not a philosophical one, and it particularly affects larger environments where capacity trending, chargeback reporting, and budget justification matter.

Where Azure Local Actually Fits

Azure Local is the right answer when the customer explicitly wants what Azure Local uniquely provides:

- A validated, Azure-managed HCI platform with a single operational model from Microsoft and the OEM. Customers who want turnkey HCI with cloud-connected lifecycle management will find Azure Local delivers exactly that.

- Azure-aligned identity, policy, and governance through native Arc integration. Organizations standardizing on Azure RBAC, Azure Policy, and Azure Monitor as their management plane will find Azure Local naturally integrated.

- Azure Kubernetes Service on-premises. Azure Kubernetes Service (AKS) enabled by Azure Arc on Azure Local, known as AKS Arc, provides on-premises Kubernetes with Azure Resource Manager-managed lifecycle, including Arc-native deployment, updates, and monitoring. This is a genuine differentiator for organizations standardizing on Kubernetes with Azure-managed operations. AKS Arc is included at no additional charge on Azure Local with the 2402 release and later (effective January 2025). Transparency note: AKS Arc on Windows Server (standalone, not Azure Local) is being retired in stages. Windows Server 2019 node pool support ended March 2026; Windows Server 2022 node pool support ends March 2027; Windows Server 2019, 2022, and 2025 as host operating systems are all being deprecated by March 2028. The full AKS Arc architecture on Windows Server is being wound down. AKS Arc on Azure Local remains the fully supported and recommended path going forward.

- A greenfield HCI deployment with no existing SAN investment where the customer wants Microsoft-native clustered storage with cloud management.

- Subscription-model preference. Some organizations genuinely prefer OpEx subscription billing over perpetual CapEx licensing.

Azure Local does not fit well when:

- The primary goal is to reuse existing servers and SAN infrastructure

- The migration depends on array-native storage mobility or replication

- Site-to-site VM replication is needed without Azure-directed recovery

- Recurring platform subscription costs must be avoided

- Continuous Azure connectivity cannot be guaranteed

- Hardware is not in the Azure Local catalog and a forced refresh would break the migration budget

- Full Windows Server roles are needed on the host alongside VMs

The distinction matters because Microsoft’s sales motion does not always draw this line clearly. Azure Local should be positioned as the right answer for customers who explicitly need its Azure-connected operating model, not as the answer for everyone who simply wants to leave VMware.

The Final Verdict

Stop assuming the only serious post-VMware answers are “buy the VCF stack” or “move to Azure Local.”

Start by asking whether Hyper-V on SAN or Hyper-V on S2D already gives you the operational outcomes you actually need (VM provisioning, live migration, rolling maintenance, DR, storage flexibility, Windows guest licensing) with less forced spend, less hardware churn, and more flexibility.

The buying logic:

If you own existing servers and SAN infrastructure, Hyper-V on SAN is the most economical and least disruptive first choice. You reuse the hardware. You reuse the storage. You get unlimited Windows Server guest licensing with Datacenter. You keep Hyper-V Replica, Storage Replica, and array-native replication for DR. You add Arc for Azure services if and when you want them, not because the platform forces you. Five-year savings: $620K+ versus VCF, $500K+ versus Azure Local.

If you want Microsoft-native HCI without Azure overhead, Hyper-V on S2D gives you Storage Spaces Direct on Windows Server 2025 without the Azure Local subscription, hardware catalog lock, or cloud connectivity dependency.

If you explicitly want Azure-connected operations, AKS on-premises, or validated HCI with cloud lifecycle management, Azure Local is a strong choice for those specific requirements.

If you need the absolute highest management polish today, VMware VCF still delivers that, at a cost that has become increasingly difficult to justify, and with a management overhead that now consumes 48–118 vCPUs, 194–473 GB RAM, and 3.2–5.7 TB (Simple to HA) before you run your first workload.

The server stays. The SAN stays. The network stays. Only the hypervisor changes.

For many organizations, Hyper-V is not the compromise answer. It is not the fallback. It is not the nostalgia play.

It is the smarter first choice.

Resources

Microsoft Documentation

- Windows Server 2025 Hyper-V — What’s New

- Hyper-V Scalability Targets

- Storage Spaces Direct Overview

- Hyper-V Replica Overview

- Storage Replica Overview

- Storage Replica — FAQ (Standard vs Datacenter editions)

- Azure Local — What Is It?

- Azure Local — Compare Azure Local to Windows Server

- Azure Local — FAQ

- Azure Local VM Management Overview

- Azure Local External Storage Support (Preview)

- Azure Local Pricing

- Azure Local — Disconnected Operations

- AKS enabled by Azure Arc — Supported Kubernetes Versions

- AKS on Windows Server Retirement Notice

- Arc-Enabled SCVMM

- Cluster-Aware Updating

- Network ATC Overview

- GPU Partitioning and Live Migration (NVIDIA vGPU requirement)

WAC Virtualization Mode (vMode) Blog Series — Microsoft Tech Community

- Part 1: Virtualization Mode Unlocked

- Part 2: Windows Admin Center Architectural Changes

- Part 3: WAC Virtualization Mode Preview Build Updated

- Part 4: Installing WAC Virtualization Mode

- Part 5: Resource Onboarding using vMode

- Part 6: Configuring Network ATC in vMode

- Part 7: Configuring Storage for Hyper-V Hosts in vMode

- Part 8: Configuring Compute in vMode

VMware / Broadcom Documentation

- VCF and VVF Core Licensing Counting (KB 95927)

- VMware Cloud Foundation Overview

- VMware Cloud Foundation 9.0 Official Documentation

- VCF 9.0 General FAQ

- VCF 9.0 Deployment Pathways

VCF 9 Sizing and Lab Resources

- Minimal Resources for Deploying VCF 9.0 in a Lab — William Lam, Broadcom

- Ultimate Lab Resource for VCF 9.0 — William Lam, Broadcom

Industry Research

Storage Vendor Documentation

Disclaimer: Pricing estimates use publicly available list rates as of Q1 2026 and are intended for directional comparison. Actual costs vary by Enterprise Agreement, LSP negotiation, and regional pricing. Feature availability is based on current Microsoft and Broadcom documentation. Azure Local external SAN support via Fibre Channel is in limited preview as of this writing. Always verify current capabilities and pricing against official vendor documentation for your specific deployment.